Solutions

What is TSA Doing Currently to Decrease Their Biases?

In March 2022, TSA announced measures to implement gender-neutral screening at its checkpoints. TSA is working with the manufacturer on an algorithm update to increase accuracy and efficiency, and it has defined new standards for screening transgender, nonbinary, and gender-nonconforming airline passengers. The PreCheck program will include an "X" gender marker option as an alternative gender category. TSA also announced that it will reduce the number of pat-down screenings without compromising security; this interim measure will remain in effect until the new gender-neutral Advanced Imaging Technology (AIT) screening technology is deployed [TSA 2022]. This is a significant stride towards reducing the bias experienced by gender-marginalized communities. However, many other groups affected by these systems still need to be addressed.

In addition, TSA reported to Congress in 2019 that it had defined standards to ensure that AIT screening equipment meets "certification standards". These include TSA's own pre-certification process as well as certification testing by a partner organization, the DHS Science and Technology Directorate's Transportation Security Lab. TSA also continues to perform certification testing of new equipment so that it can maximize the equipment's security effectiveness, operational efficiency, and passenger experience [Homeland Security 2019]. We recognize that it is important to have an outside party evaluate the performance of equipment intended to operate on people. However, most of the additional TSA standards are defined by the organization itself, which can be problematic.

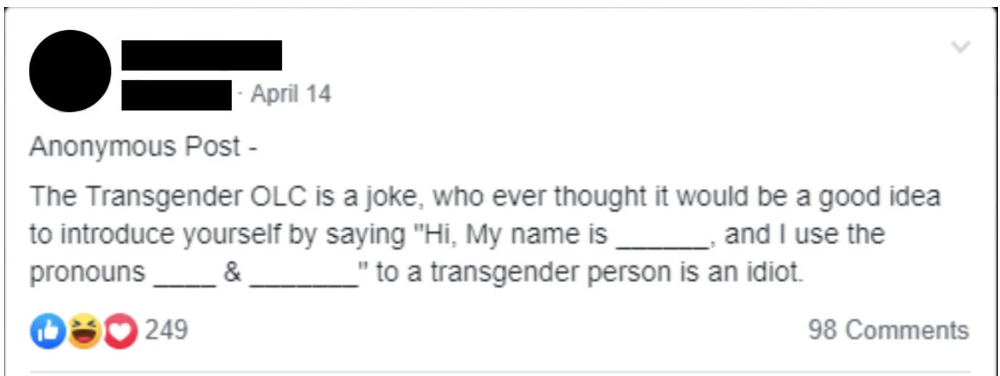

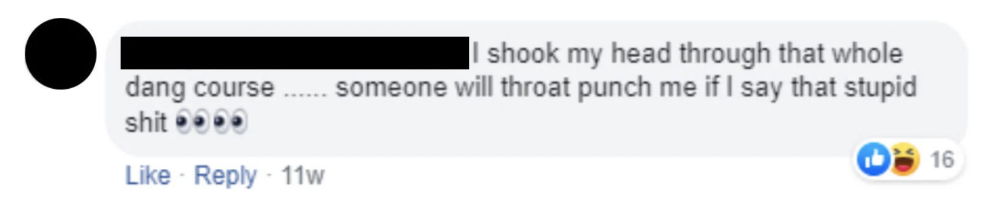

In February 2019, TSA launched a training program in the Online Learning Center (OLC) called "Transgender Awareness Training", to be completed in thirty minutes by TSA officers and supervisors. The course was meant to teach employees how to interact respectfully with members of the transgender community. To our knowledge, it did not cover how to interact with other marginalized groups. In a private Facebook group for TSA employees called the "TSA Breakroom", employees shared their opinions on the course:

Although the introduction that the OLC course instructed employees to use is common in the LGBTQ community, TSA employees in the Facebook group seemed uncomfortable or unwilling to use it. They elaborated that they felt they would be met with hostility for asking questions regarding gender identity. Further, many pointed out that the training did not address the real issue: the screening process offered only a male and a female option. As one officer put it, “I got a pink button and blue button. Which one you want?” From this group, we can see that the training may not have had the impact TSA had hoped for in mitigating bias against these individuals. Now, years later, TSA is beginning to implement a system with the "X" gender option mentioned above.

What Could TSA be Doing?

TSA officials agreed that collecting and analyzing data from AIT scanners would provide useful information related to the impact of false alarm rates. However, TSA does not collect or analyze those data at headquarters [GAO 2014].

TSA does not collect or analyze three types of available information that could be used to improve the effectiveness of the scanning system and reduce bias:

- TSA does not collect or analyze available airport-level Improvised Explosive Device (IED) checkpoint drill data on Security Officer (SO) performance at resolving alarms detected by the AIT system to identify weaknesses and enhance SO performance at resolving alarms at the checkpoint.

- TSA is not analyzing the AIT systems’ false alarm rate, even though available data could help monitor the number of false alarms that occur on AIT systems. Such monitoring would help track the potential impact of AIT systems on operational costs.

- TSA assesses the overall AIT system performance using test results that do not reflect the combined performance of the technology, the personnel that operate it, and the process that governs AIT-related security operations [GAO 2014].
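
As a rough illustration of the second point, a per-group false alarm rate could be computed from checkpoint records along the following lines. This is only a sketch under stated assumptions: the record fields (`group`, `alarm`, `threat_found`) and the sample values are hypothetical and do not reflect any actual TSA data format. The false alarm rate is taken here to be the share of threat-free screenings that still triggered an alarm.

```python
from collections import defaultdict


def false_alarm_rates(records):
    """Return {group: false alarm rate} over screenings where no threat was found.

    Each record is a dict with hypothetical keys:
      "group"        - self-reported passenger group label
      "alarm"        - True if the scanner raised an alarm
      "threat_found" - True if a real threat item was found
    """
    alarms = defaultdict(int)   # alarms raised on threat-free screenings
    benign = defaultdict(int)   # all threat-free screenings
    for record in records:
        if record["threat_found"]:
            continue  # a correct detection is not a false alarm
        benign[record["group"]] += 1
        if record["alarm"]:
            alarms[record["group"]] += 1
    return {group: alarms[group] / benign[group] for group in benign}


# Made-up sample data for illustration only.
records = [
    {"group": "A", "alarm": True, "threat_found": False},
    {"group": "A", "alarm": False, "threat_found": False},
    {"group": "A", "alarm": False, "threat_found": False},
    {"group": "A", "alarm": False, "threat_found": False},
    {"group": "B", "alarm": True, "threat_found": False},
    {"group": "B", "alarm": True, "threat_found": False},
    {"group": "B", "alarm": False, "threat_found": False},
    {"group": "B", "alarm": True, "threat_found": True},
]
rates = false_alarm_rates(records)
```

A persistent gap between groups in such a table would be one signal worth investigating, although it would not by itself establish the cause.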

Our Suggested Solutions

Focus on Algorithm:

First and foremost, we suggest collecting performance data on the AIT systems. The three types of information detailed in the section above are all available for TSA to collect, and they could enhance the effectiveness of the scanning system and reduce bias.

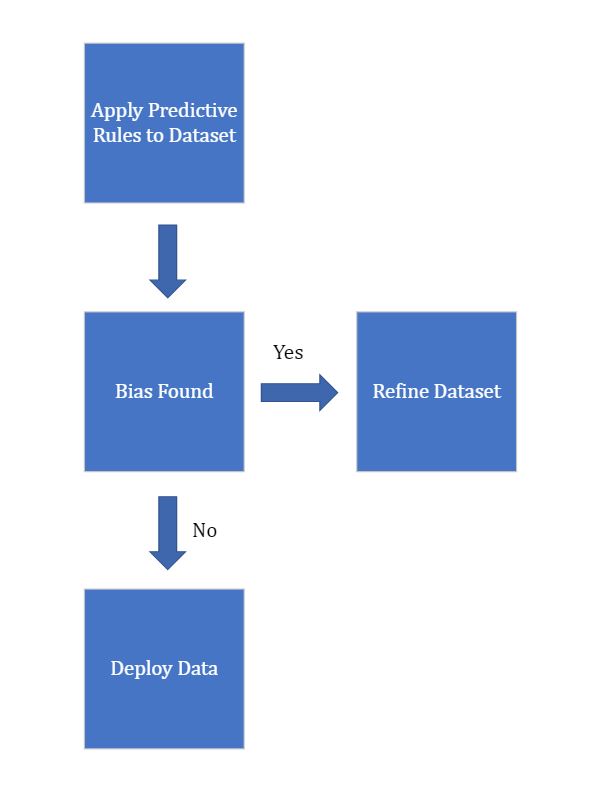

Once the data above is collected, there are several approaches that have been proposed to correct unfair predictive models. The approaches can be grouped into the following categories [Kyriazanos 2019]:

- Pre-processing the training data to reduce bias

- Post-processing the model outcomes

- Regulating the model by adding constraints during the learning phase in order to reduce bias

In each case, it is necessary to formalize an unfairness criterion as a quantitative metric before implementing any of the solutions [Kyriazanos 2019]. Such metrics usually follow the rules below:

- People similar in terms of non-protected characteristics should receive similar predictions

- Different predictions across groups can only be justified by non-protected characteristics

For reference, we define the following vocabulary:

- Protected characteristics - personal traits that cannot be used as a reason to discriminate against someone (age, religion, race, disability, language, etc.)

- Non-protected characteristics - characteristics that anybody can have (education level, economic class, etc.)

Based on this, we can formalize the two rules [Kyriazanos 2019].

Let:

- S: protected characteristics

- X: non-protected characteristics

- y: predicted output

The two rules then become:

- The predicted output y should be independent of the protected characteristics S:

E ( y | X,S ) = E ( y | X )

- If the non-protected characteristics X are independent of the protected characteristics S, then the predicted output y should depend only on X, without being influenced by S:

If E ( y | X,S ) ≠ E ( y | X ) then E ( y | X,S ) = e ( y | X ), where e is a constraint that justifies the dependence of y on X without being influenced by the dependence between X and S
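
To make the first rule concrete, the gap between E ( y | X,S ) and E ( y | X ) can be estimated from data by averaging outcomes within each combination of X and S. The sketch below assumes that X has already been reduced to a discrete stratum label and that y is a 0/1 alarm outcome; the function name `conditional_gaps` and the sample rows are hypothetical and are not taken from [Kyriazanos 2019].

```python
from collections import defaultdict


def conditional_gaps(rows):
    """Estimate E[y | X=x, S=s] - E[y | X=x] for every observed (x, s) pair.

    rows is an iterable of (x, s, y) tuples, where x is a non-protected
    stratum label, s is a protected group label, and y is the outcome.
    """
    sum_xs, n_xs = defaultdict(float), defaultdict(int)
    sum_x, n_x = defaultdict(float), defaultdict(int)
    for x, s, y in rows:
        sum_xs[(x, s)] += y
        n_xs[(x, s)] += 1
        sum_x[x] += y
        n_x[x] += 1
    return {
        (x, s): sum_xs[(x, s)] / n_xs[(x, s)] - sum_x[x] / n_x[x]
        for (x, s) in n_xs
    }


# Made-up sample data: within stratum "x0", group "B" alarms more often.
rows = [
    ("x0", "A", 1), ("x0", "A", 0), ("x0", "B", 1), ("x0", "B", 1),
    ("x1", "A", 0), ("x1", "A", 0), ("x1", "B", 0), ("x1", "B", 0),
]
gaps = conditional_gaps(rows)
max_gap = max(abs(gap) for gap in gaps.values())
```

A gap of zero for every pair corresponds to the equality in the first rule holding on the sample. Nonzero gaps on a finite sample may also reflect noise, so they would need to be judged against sample size.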

To define e, we can use the AIT system output data described above and identify, at a chosen confidence level, a value that satisfies the rules above. Doing so also requires collecting passengers' protected and non-protected information. We recommend that this data be self-reported because of its sensitivity.

Once this threshold is defined, it will be easier to categorize data as 'biased' or 'unbiased'. From there, the algorithm can be refined wherever 'biased' data is encountered.
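
One possible way to attach a confidence level to this categorization is a two-proportion z-test on alarm rates for two groups within the same non-protected stratum. The source does not specify a test, so this choice is our assumption, as are the function name `alarm_rate_differs` and the counts used in the example.

```python
from math import sqrt
from statistics import NormalDist


def alarm_rate_differs(alarms_a, n_a, alarms_b, n_b, confidence=0.95):
    """Two-sided two-proportion z-test on alarm rates of groups A and B.

    Returns (flagged, z): flagged is True when the difference in alarm
    rates is significant at the given confidence level.
    """
    p_a = alarms_a / n_a
    p_b = alarms_b / n_b
    pooled = (alarms_a + alarms_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if standard_error == 0:
        return False, 0.0  # both rates are identical at 0 or 1; nothing to test
    z = (p_a - p_b) / standard_error
    critical = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return abs(z) > critical, z


# Made-up counts: 120 alarms in 1000 screenings versus 80 in 1000.
flagged, z = alarm_rate_differs(120, 1000, 80, 1000)
# A much smaller difference: 100 in 1000 versus 95 in 1000.
flagged_small, z_small = alarm_rate_differs(100, 1000, 95, 1000)
```

With these made-up counts, the first comparison is flagged at the 95% level and the second is not. The test assumes reasonably large samples in both groups; small strata would need a different method.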

Focus on Policy:

Beyond the algorithmic approach, we advise that TSA operate with less stringent procedures. There is considerable evidence to suggest that the threats to national and international travel are not as significant as the measures taken to prevent them. The agency was created to prevent an attack like 9/11 from ever happening again. However, research by KOAA could not find a single documented case of an attempted hijacking in the US since then. In addition, KOAA could not find any instance of TSA detecting real bombs being brought onto planes or detecting any known terrorist [KOAA 2019]. Employing these biased technologies at such a large scale only leads to further discrimination against marginalized groups. Further, assuming that there is an imminent threat at all times can lead security officers to act irrationally toward groups that are more likely to trigger the AIT alarm.

Additionally, we suggest an increased degree of regulation to ensure the effectiveness of these AIT systems. The Administrator of the TSA should clarify which office is responsible for overseeing checkpoint drills and analyzing data from AIT systems for weaknesses in the screening process. By not measuring system effectiveness based on performance, TSA is not resolving anomalies detected by AIT systems. This information could improve existing screening technologies, which currently operate with biased conclusions.

Impact

If the screening technologies were less biased, they would send fewer people to human-conducted pat-down screenings. When security officers perform screenings with any degree of suspicion, they are more likely to be intrusive and disrespectful [Frank 2019]. This can reinforce negative stereotypes about marginalized groups, who are more likely to be flagged by the screening. Further, the negative experiences people have while traveling greatly influence how and when they will travel again. If someone is repeatedly treated inappropriately or without respect during their TSA screening, they are unlikely to choose that mode of travel in the future, which limits the opportunities they have as an individual. If they do choose to travel, they may be subject to long, invasive, and potentially debilitating screenings that cause them to miss their flight. By putting a new system in place, we would be able to tear down some of the barriers that transgender and gender non-conforming individuals face, creating a system that is equitable to passengers from all backgrounds. Overall, passengers should not have to feel uncomfortable, unsafe, or violated while traveling.